Tested by accident

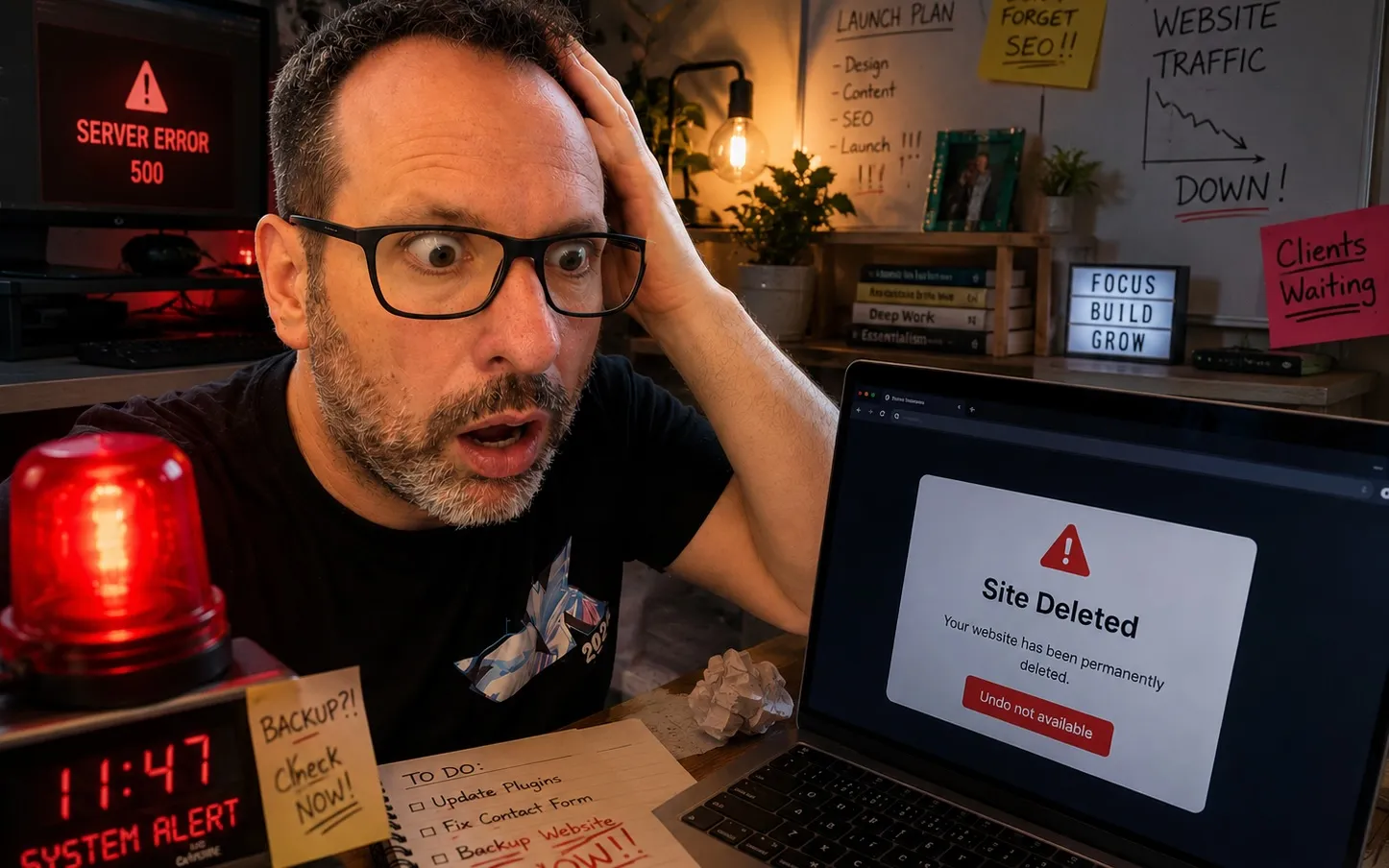

I accidentally deleted my website this weekend. The forced rebuild was the first real-pressure test of the standards-first experiment I wrote about a few days ago. The standards held — and revealed what is still unfinished.

I accidentally deleted my website this weekend. I’d love to tell you it was AI’s fault. It wasn’t — it was 100% me, misunderstanding something in my web host’s UI while in a bullish mood.

Three days ago I published a post saying AI slop is a standards problem — that if you give AI a written standard to reach for, it will. I’d been quietly running that experiment on a personal site rebuild that was nowhere near ready. I closed that post saying ask me in six months.

The universe asked me three days later.

Two options: restore the WordPress site I’d just deleted, or skip ahead and rebuild on the half-built apparatus from the slop post. The deleted site was a 2020 template I’d been mildly unhappy with for years and had been planning to redo later in the year anyway. The deletion just brought the timeline forward.

I rebuilt.

AI didn’t break the site. I did. AI helped me rebuild it.

The 36 hours

Mother’s Day in the middle. Sleep. The rest at the keyboard, mostly with Claude. What landed, on the measurable side:

- Lighthouse 100 across performance, accessibility, best practices, SEO.

- A Content Security Policy (CSP) as strict as I could make it: no inline scripts, no inline styles, no third-party origins loading on render.

Both of those are levels of quality I’d probably have struggled to reach, or just not thought to aim for, doing this on my own.
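For a sense of what a policy that strict can look like, here is a minimal sketch that assembles a CSP header value. The directive set is an assumption: the post only states the three constraints (no inline scripts, no inline styles, no third-party origins on render), and `strictDirectives` and `buildCsp` are hypothetical names, not the site's actual code.

```typescript
// Hypothetical directive set. Only the three constraints come from the post;
// the exact directives chosen here are assumptions for illustration.
type Directives = Record<string, string[]>;

const strictDirectives: Directives = {
  "default-src": ["'self'"],
  "script-src": ["'self'"], // no 'unsafe-inline', so inline <script> is blocked
  "style-src": ["'self'"], // no 'unsafe-inline', so <style> and style="..." are blocked
  "font-src": ["'self'"], // fonts must be self-hosted
  "img-src": ["'self'"],
  // Assumed exception: click-to-play video iframes, never loaded on render.
  "frame-src": ["https://www.youtube-nocookie.com"],
  "object-src": ["'none'"],
  "base-uri": ["'self'"],
};

// Serialise the directives into a Content-Security-Policy header value.
function buildCsp(directives: Directives): string {
  return Object.entries(directives)
    .map(([name, sources]) => `${name} ${sources.join(" ")}`)
    .join("; ");
}

const cspHeader = buildCsp(strictDirectives);
```

Keeping the policy as data rather than a hand-written string makes it easy for a build check to assert that `'unsafe-inline'` never creeps in.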

Beyond the metrics, the rest of the site got pulled together for the first time. The Medium archive came home along with older pieces salvaged from the deleted site. Talks going back to 2012 sit alongside the writing instead of scattered across YouTube, with topics pages tying them together by subject. Underneath all of that, a lightweight design system — tokens, type scale, self-hosted webfonts — gets the look closer to the magazine-y editorial feel I’ve been wanting since the 2020 build.

A lot of this work felt less like restoration and more like remastering: pieces I hadn’t reread in years given a better home than they’d had originally. None of it would have moved at this pace without the standards layer underneath.

Where the standards pushed back

The standards earned their keep in three different ways the same weekend.

Shiki vs the CSP. I dropped Shiki in for syntax highlighting on code blocks. The CSP gate failed: Shiki paints colour by inlining style="..." attributes on every span, and my CSP doesn’t allow inline styles. The standards forced a choice — drop Shiki, or weaken the policy. I dropped Shiki. The replacement is a plain CodeBlock component: uglier, lighter, in policy.
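A plain, in-policy code block can be very small. The sketch below is a hypothetical stand-in for that CodeBlock component, not its actual source: `renderCodeBlock` and the class names are assumptions. The point it illustrates is that all presentation hangs off classes styled by an external stylesheet, so no `style` attribute is ever emitted.

```typescript
// Escape characters that are significant in HTML text and attribute values.
function escapeHtml(text: string): string {
  return text
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

// Hypothetical renderer: emits class names only, never inline styles.
// Colours, fonts, and spacing would come from a self-hosted stylesheet.
function renderCodeBlock(code: string, language: string): string {
  const langClass = `language-${escapeHtml(language)}`;
  return `<pre class="code-block"><code class="${langClass}">${escapeHtml(code)}</code></pre>`;
}
```

The trade-off is the one described above: no per-token colour, but nothing for `style-src 'self'` to reject.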

Web fonts via Google. I’d assumed I’d grab Inter and Playfair Display from Google Fonts — a <link> tag in the head, done in thirty seconds. The privacy rule said no third-party origins on render, and a CDN font is exactly that. Same shape of choice as Shiki: lose the typography, or find a way to keep it in policy. I self-hosted both.

A fair question to ask at this point: why care about that level of strictness — strict CSP, no CDN fonts, no third-party loads of any kind — on a personal blog? I had to ask myself the same thing. There’s no auth here, no user input, no comments. The active threat surface is small.

The honest answer isn’t really about attackers. It’s about future me. The privacy rule on this site is that no third-party origins load on render; that’s the promise to readers. Strict CSP is what makes the promise enforceable rather than aspirational. Without it, six months from now I quietly drop in a “subscribe” iframe or a Medium oEmbed widget; the page still ships, and the rule is broken without anyone noticing. With it, the build fails and I have to consciously weaken the policy to ship, which is exactly the kind of decision I want to make deliberately, not by accident.

The security level isn’t the point. The forcing function is.

Video embeds. I wanted those old talks on the site. The default move is dropping in a YouTube <iframe>. The privacy rule said no third-party loads on render. So Claude proposed a facade pattern: render a self-hosted thumbnail, inject the iframe only when the user actually clicks play, and point it at youtube-nocookie.com so no request reaches YouTube and no cookie is set until interaction. It’s now the standard for any future video embed.
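The facade pattern can be sketched as two render functions: one for the initial page, one for the moment of the click. This is an illustration under assumptions, not the site's implementation: the `Video` shape, the function names, the class name, and the `autoplay=1` choice are all hypothetical.

```typescript
// Hypothetical shape: `id` is the YouTube video id, `poster` a self-hosted image path.
interface Video {
  id: string;
  title: string;
  poster: string;
}

function escapeAttr(value: string): string {
  return value
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

// Initial render: a button wrapping a self-hosted thumbnail.
// Nothing in this markup references a third-party origin.
function renderFacade(video: Video): string {
  return (
    `<button class="video-facade" data-video-id="${escapeAttr(video.id)}" ` +
    `aria-label="Play: ${escapeAttr(video.title)}">` +
    `<img src="${escapeAttr(video.poster)}" alt="" loading="lazy">` +
    `</button>`
  );
}

// Privacy-enhanced embed URL, used only after the reader clicks.
function embedUrl(id: string): string {
  return `https://www.youtube-nocookie.com/embed/${encodeURIComponent(id)}?autoplay=1`;
}

// Markup that replaces the facade on click.
function renderPlayer(video: Video): string {
  return (
    `<iframe src="${embedUrl(video.id)}" title="${escapeAttr(video.title)}" ` +
    `allow="autoplay; encrypted-media; picture-in-picture" allowfullscreen></iframe>`
  );
}
```

In the browser, a click listener registered from an external script file (an inline `onclick` would violate the CSP) would swap the button for the `renderPlayer` output. The policy's `frame-src` would also need to allow `https://www.youtube-nocookie.com`.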

Twice the standards said no. Once they said go this way instead.

The auditors I didn’t plan for

The plan in the slop post was: standards docs, deterministic checks, and a small set of advisory AI reviewers scoped to individual diffs. A post reviewer. A code reviewer. Each looking at a single change.

That was the model going into the rebuild. Working faster than I’d expected, I started feeling something the diff-scoped reviewers couldn’t see: drift across the whole repo. Was the design system actually being used, or had I quietly grown a parallel set of CSS rules elsewhere? Were the docs still describing the code, or had they fallen behind?

Two more agents emerged mid-rebuild, both site-wide. An architecture auditor that walks the repo and reports drift across docs, decisions, and abstractions. A design auditor that compares rendered screenshots against the design doc. Different verdict ladder from the per-PR reviewers — HEALTHY / DRIFTING / STALE, not SHIP / NEEDS WORK — because the question is direction, not merge readiness.

Neither was in the slop-post plan. I felt the gap during the 36 hours and built them on the spot. Which is the same lesson that post argued for code, applied to the standards apparatus itself: ship something, use it for real, see where it bends, harden the bend.

Forking the conversation

Apparatus or not, AI still misses things. Mid-rebuild I noticed the production site was throwing console errors about CSP violations. The deterministic gates hadn’t caught it — the headers check validated that the directives were set, not whether the built HTML actually complied with them. A real slip.

The move that worked: fork the Claude conversation. One branch keeps building. The other does the post-mortem — what was the gap, why did it slip through, what’s the rule that would have caught it earlier. The post-mortem branch’s output became a new gate (check:csp, scanning built HTML for inline content the CSP would block). The building branch resumed, with one more thing it couldn’t accidentally do.
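A gate of that kind can be approximated in a few lines. The sketch below is not the actual check:csp script; it is a hypothetical, regex-based approximation (a real check might use an HTML parser), and the rule names and the `findInlineViolations` function are assumptions. It flags the inline content a policy without `'unsafe-inline'` would block.

```typescript
type Kind = "inline-script" | "style-element" | "style-attribute" | "event-handler";

interface Violation {
  kind: Kind;
  snippet: string;
}

// Approximate patterns. Escaped code samples (&lt;span style=...) do not match
// because every pattern requires a literal "<" opening a tag.
const rules: Array<[Kind, RegExp]> = [
  ["inline-script", /<script\b(?![^>]*\ssrc\s*=)[^>]*>/gi], // <script> without src
  ["style-element", /<style\b[^>]*>/gi], // any <style> element
  ["style-attribute", /<[a-z][^>]*\sstyle\s*=/gi], // style="..." on a tag
  ["event-handler", /<[a-z][^>]*\son[a-z]+\s*=/gi], // onclick="..." and friends
];

// Scan one built HTML document and list everything the CSP would reject.
function findInlineViolations(html: string): Violation[] {
  const violations: Violation[] = [];
  for (const [kind, pattern] of rules) {
    pattern.lastIndex = 0; // global regexes keep state between calls
    let match: RegExpExecArray | null;
    while ((match = pattern.exec(html)) !== null) {
      violations.push({ kind, snippet: match[0].slice(0, 80) });
    }
  }
  return violations;
}
```

Run over every HTML file in the build output, a non-empty result fails the build. That closes the gap described above: the headers check proves the directives exist, and this proves the pages obey them.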

Two parallel modes for two different jobs: making, and learning what should have stopped the making. Trying to do both in one conversation is how things get muddled.

What’s still unfinished

The slop post ended on a line I still believe: set the standard, the AI will help you reach it. This weekend was the first time I’d tested that under pressure. The standards held. The site I ended up with is better than what I’d have built on my own — both aesthetically and technically — and it came together far quicker than I could have managed solo.

What’s unfinished is something I’m still trying to understand. Working with AI well isn’t a single mode — it’s a sequence of distinct stances, each with its own target. Planning isn’t standard-setting. Building isn’t auditing. The cost of being in the wrong one is real, and AI doesn’t tell you which one you’re in.

I’ve got the standards. The modes are the next thing.